
Authored by:

Richard Rowan-Robinson¹, Zhaoyuan Leong¹, Nicola Morley¹, Noel Vizcaino², Vasily Bunakov²

¹ Department of Materials Science and Engineering, University of Sheffield, Sheffield, S1 3JD

² Science and Technology Facilities Council, Scientific Computing Department, Rutherford Appleton Laboratory, Oxfordshire, OX11 0QX

Download a .pdf copy of this summary report

Download a .pdf copy of the full detail report

Key focus and activity

This case study focused on two areas: applying Natural Language Processing (NLP) to extract data from the functional magnetic materials literature, and the architecture required to merge the extracted data with laboratory data into a Resource Description Framework (RDF) database.

Area of Physical Sciences covered

Condensed Matter: Magnetism and Magnetic Materials

Spintronics

Related PS research areas

Materials Engineering – Metals and Alloys

Materials for Energy Applications

Functional Ceramics and Inorganics

Surface Science

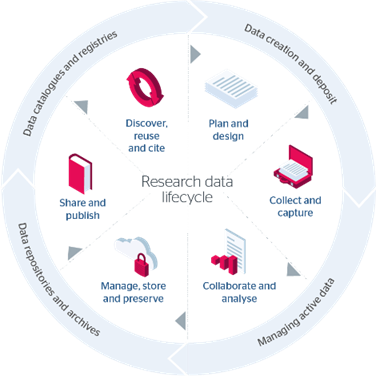

Applicability to the Research Data Lifecycle

Plan & Design: project has been planned around the main proposal objectives

Collect & Capture: data creation and capture from high-throughput experiments; looking at architectures to store data

Collaborate & Analyse: some basic analysis of the data collected

Manage, Store & Preserve: Main aim of the project – determining the best architecture to store both experimental and NLP data sets

Share & Publish: Final reports (summary and activity)

Discover, Reuse & Cite: NLP and data repositories are used to discover existing data in the literature, which is then stored with the experimental data

Figure 1 – JISC Research Data Lifecycle Model [1]

Main outputs

The outputs fall into two parts: first, the application of NLP to extract data from subdomains of the functional magnetic materials literature; and second, the merging of this data with laboratory data in an RDF database.

Key results

For the NLP extraction, the available open-access NLP software packages were evaluated (see activity report) and two were chosen for further investigation: ChemDataExtractor and MatSciBERT. A code written in Mathematica was used for comparison.

To determine how effectively existing NLP software extracts functional magnetic material data, the following steps were carried out:

- A small corpus of eight texts was created (see activity report, appendix table A1).

- The texts were manually annotated to extract the composition and magnetic properties. The three main properties extracted were saturation magnetisation (Ms), coercive field (Hc) and Curie temperature (Tc).

- The two NLP packages and our Mathematica code were run on the text corpus and the results collected.

- Comparing the NLP-extracted data with the manual annotations gave the effectiveness of each tool at extracting magnetic properties.
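As an illustration of what rule-based extraction of the three properties can look like, the following is a minimal Python sketch. It is not the Mathematica code or either NLP package used in the case study; the patterns, the unit lists and the function name `extract_properties` are assumptions chosen for this example.

```python
import re

# Hypothetical patterns: a property name, then a number and a unit within a
# short window of non-digit characters. Units are matched case-sensitively.
NUMBER = r"(?P<value>\d+(?:\.\d+)?)"
PATTERNS = {
    "Ms": re.compile(
        r"(?i:saturation magneti[sz]ation)\D{0,40}?" + NUMBER
        + r"\s*(?P<unit>emu/g|emu/cm3|kA/m|T)\b"
    ),
    "Hc": re.compile(
        r"(?i:coerciv(?:e field|ity))\D{0,40}?" + NUMBER
        + r"\s*(?P<unit>Oe|kOe|A/m|kA/m|mT)\b"
    ),
    "Tc": re.compile(
        r"(?i:Curie temperature)\D{0,40}?" + NUMBER
        + r"\s*(?P<unit>K|°C)\b"
    ),
}


def extract_properties(text):
    """Return (property, value, unit) tuples found in a piece of text."""
    found = []
    for name, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((name, float(match.group("value")), match.group("unit")))
    return found
```

A sketch like this does not link a value to an alloy composition and cannot read values from figures, so it only covers the simplest sentences.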

The table below summarises the effectiveness (Ɛ) of the different codes; Com denotes composition.

| Paper | ChemDataExtractor | | | | | MatSciBERT | | | | | Mathematica code | | | | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| | Com | Ms | Hc | Tc | Ɛ % | Com | Ms | Hc | Tc | Ɛ % | Com | Ms | Hc | Tc | Ɛ % |
| [1] | ✓ | ✓ | ✓ | – | 100 | x | x | x | – | 0 | ✓ | ✓ | ✓ | – | 100 |
| [2] | ✓ | ✓ | ✓ | ✓ | 80* | x | x | x | x | 0 | ✓ | ✓ | ✓ | x | 75 |
| [3] | x | ✓ | – | – | 50 | x | x | – | – | 0 | ✓ | ✓ | – | – | 100 |
| [4] | In part | ✓ | – | – | 75 | x | x | – | – | 0 | ✓ | ✓ | – | – | 100 |
| [5] | x | ✓ | ✓ | – | 66 | x | x | x | – | 0 | ✓ | ✓ | ✓ | – | 75** |
| [6] | In part | ✓ | – | – | 75 | x | x | – | – | 0 | ✓ | ✓ | – | – | 75** |
| [7] | ✓ | ✓ | – | – | 100 | x | x | – | – | 0 | ✓ | ✓ | – | – | 75** |
| [8] | ✓ | ✓ | – | – | 100 | x | x | – | – | 0 | ✓ | ✓ | – | – | 100 |

* An additional magnetisation value was extracted that did not exist in the abstract. ** The composition and the saturation magnetisation were both extracted but were not linked together.
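This summary does not state how Ɛ is calculated. One plausible scoring, sketched below purely as an assumption, counts each annotated field as 1 when extracted correctly, 0.5 when extracted in part and 0 when missed, and treats each spurious extraction as an extra missed field. The weights and the function name `effectiveness` are hypothetical.

```python
# Hypothetical scoring weights; they are not taken from the report.
SCORES = {"correct": 1.0, "partial": 0.5, "missed": 0.0}


def effectiveness(outcomes, spurious=0):
    """Percentage of annotated fields that an extractor recovered.

    outcomes: mapping of field name -> "correct", "partial" or "missed".
              Fields that do not appear in the paper are left out.
    spurious: number of extracted values with no counterpart in the
              manual annotation.
    """
    total = len(outcomes) + spurious
    if total == 0:
        raise ValueError("no annotated fields to score")
    earned = sum(SCORES[outcome] for outcome in outcomes.values())
    return 100.0 * earned / total
```

Under these assumptions, a paper with the composition extracted in part and Ms extracted correctly scores 75 %, and four correct fields plus one spurious value score 80 %. The rows marked ** are not reproduced by this sketch, so the scoring actually used may differ; the activity report is the place to check the definition.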

Furthermore, the output data file containing all the results was used as one of the input datasets for the RDF database.

The second part of the case study was to determine the ease of merging different functional magnetic material data sources, including experimental and literature-extracted data. We began by producing a data map of the measurements that a typical magnetic sample undergoes (Figure 2 in the activity report). Using combinatorial sputtered films as the test case added a further degree of complexity to the data merging. These films are grown on 3-inch silicon wafers and contain over 180 different alloy compositions. The relatively large volume of data output from these experiments provides a good test bed for merging this source with the new data structures. The data merging software has to link the different points defined on the wafer (each representing a unique composition) across every characterisation property measured. For example, a single MOKE data file contains ~20 different data loops, while the SQUID data is for one specific point on the wafer.
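The linking step described above can be pictured as grouping every measurement by the wafer point it belongs to. The following Python sketch shows one way to do that; the point identifiers, field names and values are invented for illustration and are not taken from the project's data files or software.

```python
from collections import defaultdict


def merge_by_wafer_point(sources):
    """Group measurements from several techniques by wafer point.

    sources maps a technique name to a list of (point_id, measurement) pairs.
    Returns {point_id: {technique: [measurement, ...]}}.
    """
    merged = defaultdict(lambda: defaultdict(list))
    for technique, records in sources.items():
        for point_id, measurement in records:
            merged[point_id][technique].append(measurement)
    # Convert the nested defaultdicts to plain dicts before returning.
    return {point: dict(by_technique) for point, by_technique in merged.items()}


# Illustrative values only: a MOKE file holding loops for several points,
# and a SQUID file covering a single point.
sources = {
    "MOKE": [("P01", {"Hc_Oe": 12.0}), ("P02", {"Hc_Oe": 15.5})],
    "SQUID": [("P02", {"Ms_emu_cm3": 1100.0})],
}
merged = merge_by_wafer_point(sources)
```

Here `merged["P02"]` holds both the MOKE and the SQUID entries, while `merged["P01"]` holds only MOKE data, which mirrors the mismatch in coverage between the two techniques.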

All data inputs and outputs (I/O) identified from the data flows will have associated metadata generated automatically. Semantic linked-data metadata will allow researchers to retain and extend domain terminology while remaining standards compliant. An early sample was produced using the W3C DCAT 2 and W3C JSON-LD standards as its main pillars. This demonstrated the viability of the proposal and served as a tangible artefact to drive discussions. This serialisation format is ready to be ingested into any RDF-aware database, which will open new avenues for sophisticated analytics, among other benefits.
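To give a sense of what DCAT metadata serialised as JSON-LD looks like, here is a minimal Python sketch that builds one dataset description. It is not the early sample produced in the case study. The `dcat:` and `dct:` terms come from the W3C DCAT and Dublin Core vocabularies, while the function name, the `example.org` identifiers and the titles are placeholders.

```python
import json

# Namespace prefixes for the DCAT and Dublin Core Terms vocabularies.
CONTEXT = {
    "dcat": "http://www.w3.org/ns/dcat#",
    "dct": "http://purl.org/dc/terms/",
}


def dataset_metadata(dataset_id, title, description, files, parts=()):
    """Build a DCAT dataset description as a JSON-LD document (a dict).

    files: iterable of (download_url, file_title) pairs.
    parts: identifiers of datasets that this dataset is composed of.
    """
    return {
        "@context": CONTEXT,
        "@id": dataset_id,
        "@type": "dcat:Dataset",
        "dct:title": title,
        "dct:description": description,
        "dcat:distribution": [
            {
                "@type": "dcat:Distribution",
                "dct:title": file_title,
                "dcat:downloadURL": {"@id": url},
            }
            for url, file_title in files
        ],
        # dct:hasPart lets a dataset be made of other datasets.
        "dct:hasPart": [{"@id": part} for part in parts],
    }


# Placeholder identifiers, for illustration only.
doc = dataset_metadata(
    "https://example.org/dataset/wafer-1",
    "Combinatorial film, wafer 1",
    "Magnetic characterisation of one sputtered wafer.",
    files=[("https://example.org/files/wafer-1-moke.csv", "MOKE loops")],
    parts=["https://example.org/dataset/wafer-1-squid"],
)
serialised = json.dumps(doc, indent=2)
```

Because the result is plain JSON with an `@context`, it can be stored as a file next to the data and later loaded by tools that understand JSON-LD.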

Outcomes and Recommendations

The overall outcome is that, as a proof of concept, both the NLP and data merging tools show promise for applications within materials science. However, a number of issues arose during the case study that would have to be addressed before either tool could be used by materials scientists. At present, the available tools assume prior knowledge of the software, so anyone coming from a different discipline will struggle to use and adapt them for their applications. Thus, the main requirements of the infrastructure are:

- User-friendly tools for non-developers.

- Better documentation for existing tools, with the documentation included as metadata.

- FAIR (and separated) data and metadata.

- Most of the rich metadata is automatically generated.

- Rich, highly structured metadata.

- Domain-infused metadata.

- The metadata is logically interconnected as part of a larger Knowledge Graph.

- Custom hierarchies of datasets. Datasets can be made of other datasets.

- Metadata must be incrementally refined (and expanded if required).

- The metadata is flexible enough to accommodate changes.

- The experiment workflow should also be captured in the metadata.

- I/O parameters for processing modules (beyond the data files) described in the metadata.

- Everything must be driven by international standards, beyond the core standards (DCAT 2 and JSON-LD).

- The resulting metadata and its associated data files are uploaded to a database and to object storage, respectively.

- The common database system holds all metadata (which is networked by design).

- Shared electronic notebooks for collaboration, to allow groups to develop code etc. together.

- Any tools/scripts/notebooks will be part of a common repository shared by the users based on their roles and permissions.

- Incorporate external tools, e.g. NLP software, into the process.

- User-bound views will be produced from the data, e.g. a PDF report will be produced automatically from suitable data.

- Minimal user input: users will only be asked for essential information (not currently known and not deducible by the system).

- The resulting system should hide any data processing complexity. However, showing domain-bound complexity is highly desirable.

- Users should be able to query anything regarding their data and its associated processes.
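Two of the requirements above, automatically generated metadata and minimal user input, can be illustrated with a short Python sketch. Everything that can be deduced from a data file is collected by the code, and only the remaining fields are supplied by the user. The function name and the field names are hypothetical and do not follow any particular standard.

```python
import hashlib
import mimetypes
from datetime import datetime, timezone
from pathlib import Path


def auto_metadata(path, **user_fields):
    """Collect file-level metadata automatically, then add user-supplied fields."""
    file_path = Path(path)
    stat = file_path.stat()
    guessed_type, _ = mimetypes.guess_type(file_path.name)
    record = {
        "file_name": file_path.name,
        "byte_size": stat.st_size,
        "modified": datetime.fromtimestamp(stat.st_mtime, tz=timezone.utc).isoformat(),
        "media_type": guessed_type or "application/octet-stream",
        # A checksum lets the metadata be matched to its data file later.
        "sha256": hashlib.sha256(file_path.read_bytes()).hexdigest(),
    }
    # Only information the system cannot deduce is asked of the user.
    record.update(user_fields)
    return record
```

A call such as `auto_metadata("loop.csv", sample="wafer-1")` would return the deduced fields plus the single user-supplied `sample` entry; both the file name and the `sample` field are placeholders.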

These changes would allow a much wider range of researchers to use the tools in their research, without having to be experts in the underlying code.

From the first part of the project, the main outcome was that existing NLP software can be used to extract magnetic materials property data once the code has been altered. Promising results were achieved, especially for the properties, but there were limitations (see activity report for further details). These included limited success in extracting alloy compositions correctly, difficulty adapting the software to the research field, and limited documentation for the tools. In addition, none of the tools were capable of extracting data from figures. Thus, the additional infrastructure required would be:

- NLP tools that can extract data from figures.

- Tools/software that are developed specifically for a research area, or that can be easily adapted by non-experts to work within their research field.

The foremost benefit is that more data can be extracted from publications. The majority of results are often presented as data points on a graph rather than written in the text, so being able to extract this information will increase the data added to the database. Furthermore, software/tools that are simpler to adapt to different research fields will benefit the whole materials community, as they allow different disciplines to use NLP for database building.

Full detail report

The full detail report produced by this case study can be found at: Full Report Link